DR-CircuitGNN: Training Acceleration of Heterogeneous Circuit Graph Neural Network on GPUs

Published in ACM International Conference on Supercomputing (ICS), 2025

Abstract

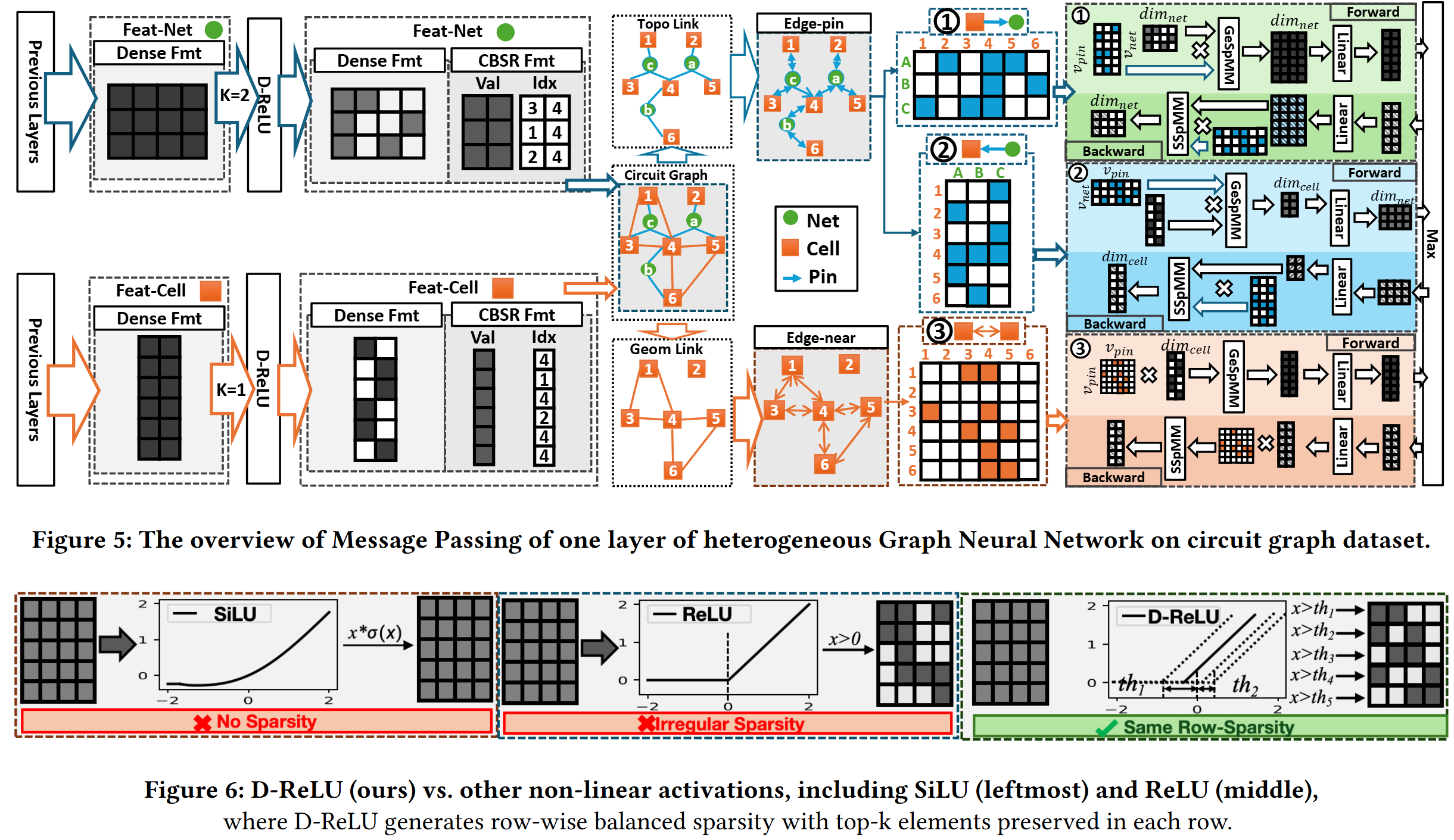

We present DR-CircuitGNN, a GPU-accelerated training framework for heterogeneous circuit graph neural networks. By exploiting data reuse patterns specific to circuit graph structures and designing GPU-optimized computation kernels, our approach substantially speeds up training while maintaining model accuracy.

Key Contributions

- Novel data reuse strategies tailored for heterogeneous circuit graph structures

- GPU-optimized kernels for circuit GNN training acceleration

- Comprehensive evaluation on real-world EDA circuit benchmarks

Authors

Yuebo Luo, Shiyang Li, Junran Tao, Kiran Gautam Thorat, Xi Xie, Hongwu Peng, Nuo Xu, Caiwen Ding, Shaoyi Huang

In Proceedings of the 39th ACM International Conference on Supercomputing (ICS '25), June 8-11, 2025, Salt Lake City, USA.

Recommended citation: Y. Luo, S. Li, J. Tao, K. G. Thorat, X. Xie, H. Peng, N. Xu, C. Ding, S. Huang. "DR-CircuitGNN: Training Acceleration of Heterogeneous Circuit Graph Neural Network on GPUs." In Proceedings of the 39th ACM International Conference on Supercomputing (ICS '25), 2025.
